This section of the K-OAr Center’s site ports some content from the Speech Data & Tech website, formerly at https://speechandtech.eu/ (its latest copy remains available on the Internet Archive).

Technologies and tools for speech data

What kind of technologies and tools can be applied when working with speech data?

Humanities scholars are generally well versed in hermeneutic analysis, but sometimes lack the knowledge required to understand the technologies and tools that have been developed to process speech data digitally. This understanding is further hampered by the frequent use of abbreviations and jargon in computer science.

To bridge this gap, this section describes various technologies and the digital tools that implement them. The pages cover the kinds of techniques a humanities scholar might want to use when working with spoken data. For each technique, we provide two sections: the first describes the underlying technology in general terms, and the second lists one or more specific tools that make this technology available for research. Additionally, a glossary explains the most frequently used terms and abbreviations in speech and language technology.

Any machine processing of human written or spoken language requires specific techniques and tools. Broadly, it consists of a sequence of tasks: Recording, Recognition, Analysis.

During recording, speech and any other analog data, including written documents, must be digitised with sufficient fidelity. Speech is recorded in one of the many audio formats, each with its own technical parameters (such as sampling rate, bit depth and number of channels), and written language is captured as images obtained by scanning.
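The fidelity parameters mentioned above directly determine how much data a recording produces. As a rough illustration, assuming uncompressed PCM audio (as stored in a typical WAV file, ignoring the small file header), the data rate is simply sampling rate × bytes per sample × number of channels. The function name below is our own.

```python
def pcm_bytes(sample_rate_hz: int, bit_depth: int, channels: int, seconds: float) -> int:
    """Size in bytes of uncompressed PCM audio (file header not included)."""
    bytes_per_sample = bit_depth // 8
    return int(sample_rate_hz * bytes_per_sample * channels * seconds)


# One minute of CD-quality stereo audio: 44.1 kHz, 16-bit, 2 channels
cd_minute = pcm_bytes(44_100, 16, 2, 60)
# One minute of 16 kHz, 16-bit mono, a common input format for ASR engines
asr_minute = pcm_bytes(16_000, 16, 1, 60)
print(cd_minute, asr_minute)  # 10584000 1920000
```

Compressed formats such as MP3 are much smaller, but they discard information, which is why archives usually keep an uncompressed master copy.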

Recognition is the task of converting raw digital data into symbols. It is thus a categorisation process: in optical character recognition (OCR), regions of an image are associated with character symbols, and likewise in automatic speech recognition (ASR), fragments of speech are associated with character symbols. There are also other types of recognition: speaker diarization aims to determine who is speaking when, and emotion recognition aims to extract information about a speaker’s emotional state.

The performance of automatic recognition systems is expressed as an error rate, calculated from the differences between an ideal (reference) recognition result and the result the system actually produced; for ASR this is usually the word error rate (WER). In general, human performance is considered the gold standard, meaning that machine output is compared against a transcription made by humans.
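The word error rate counts the minimum number of word substitutions (S), deletions (D) and insertions (I) needed to turn the system output into the human reference, divided by the number of words N in the reference: WER = (S + D + I) / N. A minimal sketch, assuming transcripts are compared as whitespace-separated words without any normalisation of case or punctuation (real evaluations usually normalise both first); the function name is our own.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / words in the reference."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    # Word-level edit distance, computed row by row (dynamic programming).
    # prev[j] = distance between the reference words seen so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing in output
                curr[j - 1] + 1,     # insertion: extra word in output
                prev[j - 1] + cost,  # substitution (or match when cost == 0)
            )
        prev = curr
    return prev[-1] / len(ref)


reference = "I um I, I thought I'd do that for a moment"
hypothesis = "I thought I'd do that for a moment"
print(round(word_error_rate(reference, hypothesis), 3))  # 0.273
```

Here three of the eleven reference words are missing from the output, giving a WER of 3/11. Note that a WER can exceed 1 when the output contains many insertions.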

Analysis is any higher-level processing of speech and language, in which one tries to associate meaning with the symbolic representation. In general, it combines the results of the recognition process with knowledge-based representations. For example, an automatic speech recognition system commonly returns several candidate words for a stretch of speech; analysing the syntactic structure in which the candidates occur allows the most plausible word to be selected.
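The idea of choosing among candidate words by looking at their context can be illustrated with a toy example. The sketch below deliberately substitutes a tiny table of word-pair (bigram) counts for real syntactic analysis; the counts, the candidate lists and the function names are invented purely for illustration.

```python
from itertools import product

# Hypothetical word-pair counts standing in for knowledge about which word
# sequences are plausible. A real system would use a trained language model
# or a syntactic parser instead.
BIGRAM_COUNTS = {
    ("i", "read"): 8,
    ("read", "the"): 9,
    ("the", "book"): 12,
    ("the", "brook"): 1,
}


def score(words) -> int:
    """Sum the counts of all adjacent word pairs (unknown pairs count 0)."""
    return sum(BIGRAM_COUNTS.get(pair, 0) for pair in zip(words, words[1:]))


def best_sequence(candidates) -> str:
    """Try every combination of candidate words and keep the best-scoring one."""
    return " ".join(max(product(*candidates), key=score))


# The recogniser could not decide between "read"/"red" and "book"/"brook".
lattice = [["i"], ["read", "red"], ["the"], ["book", "brook"]]
print(best_sequence(lattice))  # i read the book
```

Trying every combination grows exponentially with the length of the utterance; practical decoders use dynamic programming or beam search to explore only the promising paths.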

Automatic Speech Recognition

Automatic Speech Recognition (ASR) is software that converts recorded speech into written text, a process somewhat analogous to Optical Character Recognition (OCR), which converts images of text into machine-readable characters.

From roughly 2000 to 2018, ASR was driven largely by classical machine learning techniques such as Hidden Markov Models (HMMs). Despite their long dominance, the accuracy of these models eventually plateaued, which opened the way for new approaches based on deep learning.

The advent of powerful generative AI models has brought about a major shift in this field. As of 2023, the best ASR models work end-to-end (E2E): audio is converted directly into text in a single step. Notable examples include wav2vec 2.0 by Facebook/Meta, published in 2020, and the more recent Whisper by OpenAI, released in September 2022. Whisper in particular performs exceptionally well and can recognize approximately 100 languages with a single model.

Older speech recognition technologies, such as the Kaldi toolkit, are gradually becoming obsolete. They may still have niche applications where their performance is acceptable, but the clear superiority of models like wav2vec 2.0 and Whisper means there is little reason to invest further in these dated ASR systems.

ASR Tools Overview

Automatic Speech Recognition (ASR) can be carried out either with a desktop application on your own computer, whether it runs Windows, Linux or macOS, or via an online service. Both approaches have their own advantages and drawbacks.

Online ASR services are user-friendly and usually require little computing power on your side. However, they raise privacy concerns. Most of these platforms are cloud-based, which means that your recorded conversations are stored, at least temporarily, on an external server. Moreover, by using a cloud service you may in some cases unknowingly agree that your recordings can be used for the provider’s own research purposes, which poses significant privacy issues. It is therefore essential to read the terms and conditions of any ASR service carefully before deciding whether its privacy measures meet your requirements.

For highly sensitive data, or when trust in online services is questionable, running an ASR application on your own computer gives you full control over the entire process. Your data is not transferred elsewhere for processing, and the software can run without an internet connection if desired. However, this approach has its own challenges: local ASR is often more complex to set up and requires a computer with adequate processing power. Whereas many online ASR services work fine on a basic laptop designed for web browsing, such as a Chromebook, the same cannot be said for locally installed software.

In the following sections, we highlight several notable ASR services. One of them is the Transcription Portal, an online ASR tool to whose development members of our team have contributed substantially. Other, independent options are discussed as well.

Whisper, a new ASR engine

Whisper is an automatic speech recognition (ASR) system trained on 680,000 hours of multilingual and multitask supervised data collected from the web. Its developer, OpenAI, reports that training on such a large and diverse dataset improves robustness to accents, background noise and technical language. The model can also transcribe speech in multiple languages and translate speech from those languages into English.
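In OpenAI’s open-source `openai-whisper` Python package, transcription and translation into English are both options of the same `transcribe` call (its `task` parameter). A minimal sketch, assuming the package is installed (`pip install openai-whisper`, which also needs the `ffmpeg` program); the wrapper function `run_whisper` is our own, and the model object is passed in so the logic can be tried without downloading a model.

```python
def run_whisper(model, audio_path: str, task: str = "transcribe", language=None) -> str:
    """Return Whisper's output text for one audio file.

    task="transcribe" keeps the spoken language; task="translate" outputs English.
    language=None lets Whisper detect the spoken language itself; otherwise pass
    a language code such as "nl".
    """
    if task not in ("transcribe", "translate"):
        raise ValueError("task must be 'transcribe' or 'translate'")
    result = model.transcribe(audio_path, task=task, language=language)
    # The result is a dict; "text" holds the full transcript as one string.
    return result["text"].strip()
```

In practice you would create the model with `model = whisper.load_model("base")` and then call, for example, `run_whisper(model, "interview.wav", task="translate")`, where the file name is a placeholder. Translation requires one of the multilingual models; the English-only variants end in `.en`.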

Privacy Concerns

Privacy is a paramount consideration when dealing with audio-visual (AV) recordings, and even more so with “sensitive” recordings, which the interviewer or the owner of the recordings must handle carefully and responsibly. Whisper is highly versatile: it can run on a high-performance server, a personal laptop or anything in between. It delivers consistent recognition results across platforms; the main difference is processing speed, which depends on your computer’s capabilities. The more powerful your device, especially if it has a suitable graphics card (GPU), the faster the recognition. Therefore, if you work with sensitive data and have a fast computer, we strongly recommend installing Whisper on your own system to minimize the risk of data breaches.
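A local installation also makes it easy to process a whole collection without any recording leaving your machine. A minimal sketch, assuming the `openai-whisper` package; the function name, the folder layout and the choice to write a `.txt` file next to each audio file are our own illustrative choices.

```python
from pathlib import Path


def transcribe_folder(model, folder: str, pattern: str = "*.wav") -> list:
    """Transcribe every matching audio file locally and save a .txt next to it.

    Returns the paths of the transcript files that were written.
    """
    written = []
    for audio in sorted(Path(folder).glob(pattern)):
        result = model.transcribe(str(audio))  # runs on this machine only
        out = audio.with_suffix(".txt")
        out.write_text(result["text"].strip() + "\n", encoding="utf-8")
        written.append(out)
    return written
```

With `model = whisper.load_model("medium")`, the package uses a CUDA graphics card automatically when PyTorch detects one and falls back to the CPU otherwise. The model weights are downloaded once and cached locally; after that, transcription needs no internet connection.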

Is ASR Fully Developed?

As of June 2023, the answer is no: Whisper still has room for improvement. Its drawbacks include the lack of diarization (identifying who is speaking) and occasional over-simplification of transcriptions. For example, hesitant speech such as “I um I, I thought I’d do that for a moment” is typically transcribed by Whisper as “I thought I’d do that for a moment”.

This behavior likely stems from Whisper’s decoder, which works as a language model (loosely comparable to the one behind ChatGPT) and tends to turn recognized speech into fluent sentences. While this is generally effective for transcribing most speech, it may be less suitable for research on speech or dialogue in which hesitations, pauses, repetitions and other disfluencies are the object of study.

Transcription Portal

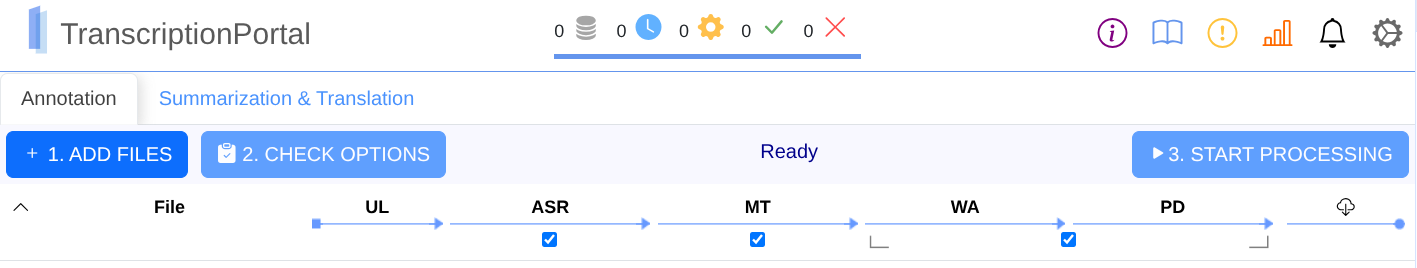

To help scholars transcribe their audio, CLARIN ERIC supported an initiative to build the TranscriptionPortal, where scholars can upload audio files, select the spoken language and optionally a language model, and process the files. Once processing is complete, the results can be downloaded. Note that an academic login is required to use this tool.

Dataflow

The first version of the CLARIN TranscriptionPortal was launched in September 2018. The portal, currently hosted at LMU in München, collects the audio files and sends them to ASR engines in the different countries. For example, a Dutch audio file from a scholar in Stockholm is sent to München and, once Dutch has been selected as the language, forwarded from there to Nijmegen in the Netherlands, where the audio is recognised. The results are sent back to München and from there returned to Stockholm.
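The hub-and-spoke dataflow described above can be summarised in a toy model. Only the hub (München) and the Dutch engine location (Nijmegen) come from the text; the routing table, the language code `"nld"` and the function name are hypothetical, and the sketch does not reflect how the portal is actually implemented.

```python
# Hypothetical routing table: language code -> location of its ASR engine.
# Only the Dutch entry is taken from the description above.
ENGINES = {"nld": "Nijmegen"}


def route(audio_name: str, language: str, client_city: str, hub: str = "München") -> list:
    """Return the hops an audio file, and then its transcript, travel through."""
    if language not in ENGINES:
        # Not every language has a recogniser available (see below).
        raise LookupError(f"no recogniser registered for language {language!r}")
    engine = ENGINES[language]
    return [
        f"{client_city} -> {hub}: upload {audio_name}",
        f"{hub} -> {engine}: forward audio for recognition",
        f"{engine} -> {hub}: return transcript",
        f"{hub} -> {client_city}: download transcript",
    ]


for hop in route("interview.wav", "nld", "Stockholm"):
    print(hop)
```

The design keeps a single entry point for users while each recogniser stays with the institution that maintains it.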

The underlying idea is that a speech recognizer should be available somewhere in Europe for every European language. This is not yet the case, so for the missing languages an attempt is being made to use available commercial recognisers. However, there is no guarantee that these handle the data in compliance with the GDPR. In the long term, CLARIN therefore aims to make a good recognizer available for as many languages as possible.

Workflow

Go to the TranscriptionPortal website, select one or more sound files, upload them, select the language and optionally a language model, start the recognition and, once it has finished, download the automatically generated transcription(s). Currently, the audio files must be mono or stereo WAV files, but in the near future the portal itself will convert a range of audio formats into the required WAV format.
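Before uploading, you can check locally that your files meet the mono-or-stereo WAV requirement. A minimal sketch using Python’s standard `wave` module; the function name is our own, and because `wave` only reads uncompressed PCM WAV files, this check may be stricter than what the portal itself accepts.

```python
import wave


def check_wav_for_portal(path: str) -> dict:
    """Check that a file is a mono or stereo PCM WAV file and report its parameters.

    Raises ValueError if the file cannot be read as WAV or has another channel count.
    """
    try:
        with wave.open(path, "rb") as wav:
            channels = wav.getnchannels()
            info = {
                "channels": channels,
                "sample_rate_hz": wav.getframerate(),
                "bit_depth": wav.getsampwidth() * 8,
                "duration_s": wav.getnframes() / wav.getframerate(),
            }
    except (wave.Error, EOFError) as exc:
        raise ValueError(f"{path} is not a readable WAV file: {exc}") from exc
    if channels not in (1, 2):
        raise ValueError(f"{path} has {channels} channels; mono or stereo expected")
    return info
```

Calling `check_wav_for_portal("interview.wav")` (a placeholder file name) returns the channel count, sampling rate, bit depth and duration, or raises an error explaining why the file would not be suitable.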

For stereo files, the portal asks users whether they want the two audio channels processed separately or together (i.e. downmixed to a single mono signal).

If “separately” is chosen, the two channels are processed one after the other. When an interview with two speakers has been recorded with each speaker on a separate channel, this makes it easier to separate the speakers, determine turn-taking and (sometimes) obtain a better recognition result.
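What the two options mean for the signal can be sketched with Python’s standard library alone: “separately” corresponds to splitting the interleaved stereo samples into two mono channels, and “together” to mixing them into one. A minimal sketch, assuming 16-bit PCM stereo WAV input; it is not the portal’s own code, the function names are our own, and averaging is only one common way to downmix.

```python
import sys
import wave
from array import array


def read_stereo_16bit(path: str):
    """Return (left, right, sample_rate) from a 16-bit stereo PCM WAV file."""
    with wave.open(path, "rb") as wav:
        if wav.getnchannels() != 2 or wav.getsampwidth() != 2:
            raise ValueError("expected a 16-bit stereo WAV file")
        rate = wav.getframerate()
        samples = array("h", wav.readframes(wav.getnframes()))
    if sys.byteorder == "big":  # WAV stores samples little-endian
        samples.byteswap()
    # Samples are interleaved L, R, L, R, ...: take every other one.
    return samples[0::2], samples[1::2], rate


def downmix(left, right):
    """'Together': average both channels into one mono signal."""
    return array("h", ((ls + rs) // 2 for ls, rs in zip(left, right)))


def write_mono_16bit(path: str, samples, rate: int) -> None:
    """Write one channel (or a downmix) as a 16-bit mono WAV file."""
    data = array("h", samples)
    if sys.byteorder == "big":
        data.byteswap()
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(rate)
        wav.writeframes(data.tobytes())
```

For example, `left, right, rate = read_stereo_16bit("interview.wav")` followed by `write_mono_16bit("speaker_a.wav", left, rate)` and `write_mono_16bit("speaker_b.wav", right, rate)` yields one file per speaker (all file names are placeholders).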

Once processing is complete, the results can be downloaded and processed further according to the scholar’s needs.